The Deployment Paradox: How AI and Complex Systems Actually Reach Markets

You need trust to deploy, but you need deployment to earn trust. How to resolve the circular dependency.

Since 2008, Spotify has grown from 6 million to 600+ million users through patient geographic expansion and gradual catalog building. Each new market taught them about licensing, each user cohort revealed listening patterns. The vision was revolutionary (replace music ownership with streaming), but the execution was evolutionary. This combination of bold vision and gradual deployment built a $60-billion business.

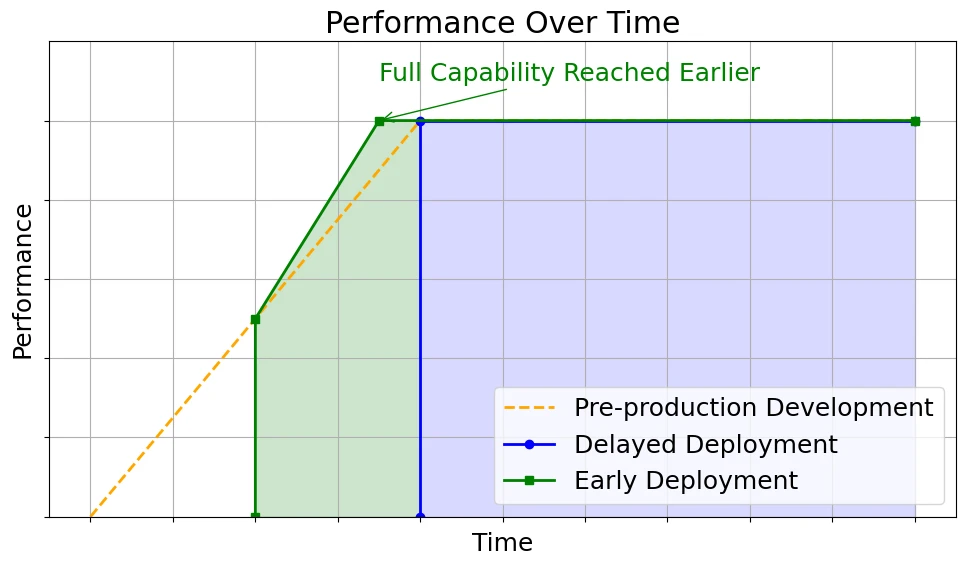

Most enduring technologies follow this pattern. They rarely succeed through single, spectacular leaps but through iterative feedback loops that teach founders and users how to adapt to one another. Revolutionary deployments (following step functions) sometimes succeed spectacularly: the iPhone eliminated keyboards overnight, ChatGPT reached 100 million users in two months.

The rule is ramp functions that build capability, trust, and understanding over time, not step functions.

AI pushes this pattern to its extreme. You must deploy before you’re ready, because readiness itself can only be learned through deployment. Yet you’re asking users to trust a system that will fail them sometimes, in ways you can’t fully predict beforehand. Each release is both a product launch and a social experiment in trust calibration.

As AI systems shift from tools that suggest to agents that act, the stakes multiply. A writing assistant that suggests an edit can be ignored. A trading or scheduling agent that executes autonomously can spend money, commit resources, or damage relationships. When models call models, errors cascade. When agents make decisions, accountability blurs. Each layer of autonomy compounds the uncertainty.

This creates a unique strategic dilemma. You need to expose the system to the world to learn its real behaviour, but the very exposure that generates learning also exposes users to risk. The tighter the feedback loops you need for improvement, the higher the potential cost of early errors. And the higher the stakes, the more reluctant users become to participate in that learning process.

This tension sits at the heart of what we call the Deployment Paradox.

The Deployment Paradox Defined

Here’s the paradox: You need trust to deploy, but you need deployment to earn trust.

The data from real usage enables model improvement and product refinement, yet you can’t access that data without first convincing users to engage. Trust, deployment, and quality form a circular dependency.

In traditional software, this loop doesn’t exist. Deterministic systems produce consistent outputs (input X yields output Y), thus trust is binary: it works or it doesn’t. AI systems are probabilistic. The same input can yield multiple outcomes, and even an 85-percent accurate system fails 15 percent of the time. Users must learn when to trust, when to verify, and when to ignore. That intuition can’t be engineered in advance; it requires experience across many interactions in diverse contexts.

Autonomy then amplifies the problem. The further AI moves from assisting to acting, the more its failures carry material consequences. Wrong suggestions can be dismissed; wrong actions cost money, time and reputation. Networks of agents interacting with each other multiply both complexity and uncertainty.

The paradox intensifies in high-stakes domains (legal, medical, financial), where trust is needed before deployment. These are precisely the fields where learning through mistakes is least tolerable, and yet where deployment-based learning is most required.

That’s why the central question in AI strategy isn’t “when is the technology ready?” but “how do we deploy imperfect AI that will make it ready?”

Why Complex Systems Emerge Through Iteration

In 1992, Mitchell Waldrop published “Complexity: The Emerging Science at the Edge of Order and Chaos,” documenting how scientists discovered a shared principle: complex systems cannot be designed top-down or deployed all at once. They must evolve through feedback loops, adaptation, and time.

Crucially, we must distinguish complex systems from merely complicated ones. The Panama Canal is complicated (many interdependent parts) but not complex: its behaviour is deterministic and fully specifiable. Markets and AI systems are complex: behaviour emerges from countless interactions that cannot be fully predicted. Revolutionary deployment works for complicated systems where outcomes are specifiable, but fails for complex ones where outcomes must emerge through use.

Three converging insights explain why:

Innovation Moves Through Stepping Stones (Kauffman’s “Adjacent Possible”): Stuart Kauffman showed that life couldn’t leap from simple molecules to DNA overnight; each reaction only made nearby reactions possible. The same law governs technology.

Even when breakthroughs seem sudden (penicillin, the transistor, CRISPR), their deployment required enabling conditions already in place. The iPhone felt revolutionary but depended on a web of mature adjacencies: capacitive touchscreens, ARM processors, 3G networks, lithium batteries. Without them, it would have been impossible in 1995.

Operational takeaway: You can’t deploy into non-existent adjacencies. Each product release must prepare the substrate (data pipelines, infrastructure, user understanding) that makes the next adjacency reachable.

Systems Balance Structure and Fluidity (Langton’s “Edge of Chaos”): Chris Langton demonstrated that adaptive systems survive in a narrow zone between rigidity and randomness. Too rigid, and they stagnate; too chaotic, and they disintegrate.

Operational takeaway: Design deployments with a stable core (APIs, mental models) and flexible periphery (features, models, UI). Users can adapt when structure anchors change.

Complexity Emerges From Iteration (Holland’s Genetic Algorithms): John Holland showed that complex behaviour can’t be pre-specified; it emerges through variation, selection, and heredity across iterations. Each generation encodes what worked before, producing designs no human could have planned in advance.

Operational takeaway: Complexity isn’t delivered; it’s discovered through iteration. Each deployment tests an assumption, and the tighter the feedback and inheritance loops, the faster capability compounds.

Trust Accumulation: The Psychological Shifts

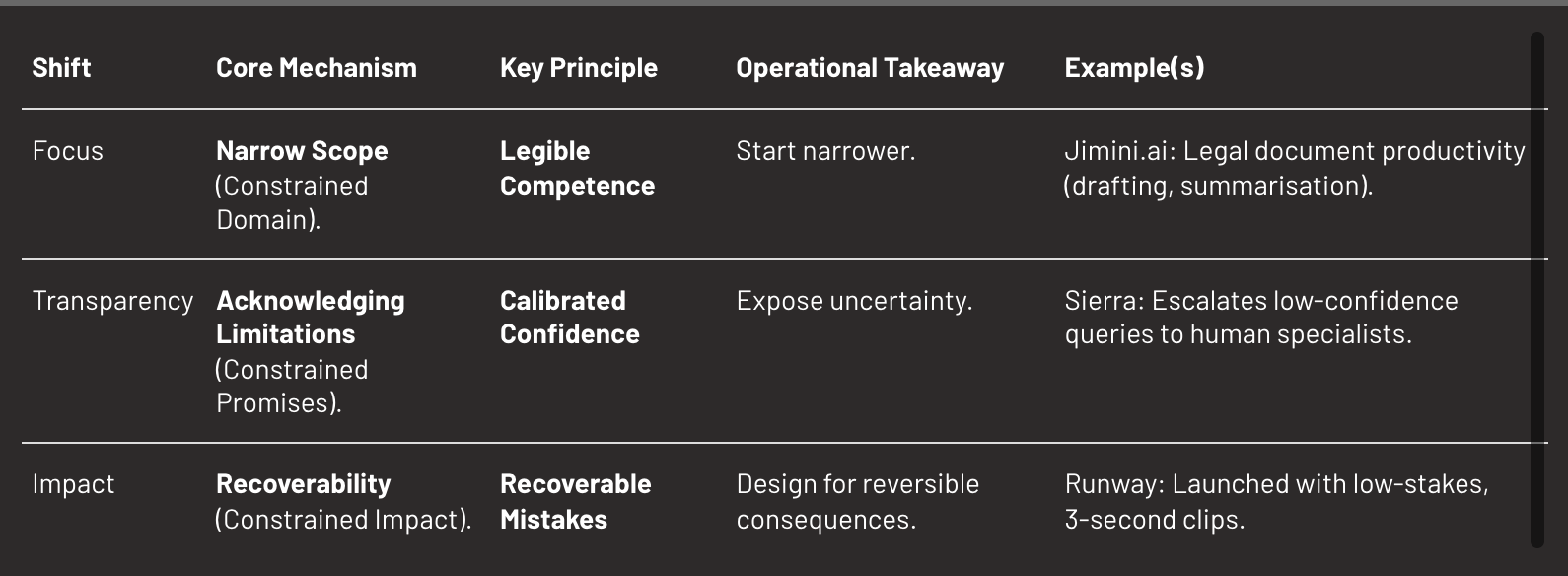

The paradox resolves through strategic constraint design. If you can’t deploy without trust, constrain what must be trusted: limit scope, clarify boundaries, and make mistakes survivable.

AI systems require two kinds of trust simultaneously:

- Accumulation: belief that the system works reliably across time.

- Calibration: intuition about when to trust specific outputs.

Traditional software needs only accumulation (it either works or doesn’t). AI needs both, and both emerge only through interaction. Effective deployment builds them sequentially through three shifts.

Shift 1: From Skepticism to Confidence (Narrow Success)

When an AI performs consistently in a constrained domain, users infer its underlying competence. Empirical reliability replaces abstract promises.

Jimini.ai exemplifies this in legal AI. Rather than claiming general legal capability, they focused narrowly on document productivity: drafting, summarisation, extraction. Every AI suggestion includes citations showing exactly which document sections support each claim. Lawyers verify sources instantly, turning traceability into trust. The narrow focus creates a learning loop: the AI learns lawyers’ terminology and reasoning patterns, improving relevance, which drives more usage and richer training data.

Operational takeaway: Start narrower than feels comfortable. Domain precision makes reliability legible.

Shift 2: From Opacity to Calibration (Transparent Boundaries)

Acknowledging what your system cannot do increases trust more than emphasising capabilities. Signaling self-awareness implies reliability under uncertainty.

Sierra, the AI customer service platform valued at $10 billion, uses a multi-model architecture that monitors confidence in real time. High confidence (account queries, order tracking): AI handles fully. Low confidence (complex complaints, nuanced policy, emotional situations): the system explicitly escalates to specialists. This transparency signals calibrated capability.

Research validates this counterintuitive insight. Teams at DeepMind and Inflection discovered through deployment experience that users don’t need AI to hide its failures: they need AI to be useful despite them.

Operational takeaway: Expose uncertainty instead of concealing it. Users calibrate through visibility, not perfection.

Shift 3: From Critic to Collaborator (Recoverable Mistakes)

In early deployment, every error is data, but only if it doesn’t destroy trust. Gradual, low-stakes contexts make mistakes recoverable, and turn users into co-learners.

Runway, the AI video generation platform valued at $3 billion, launched with constraints that made errors recoverable. Rather than promising production-quality output, they started with 3-second clips, basic tools, simple edits. When outputs missed the mark, creators regenerated clips or refined prompts. Low stakes (music videos, social content, VFX tests) meant errors rarely blocked production.

This created a dynamic where users became collaborators. The constrained outputs meant mistakes had limited blast radius. This recoverability gave Runway permission to expand progressively: from 3-second clips to 10+ second videos, from simple edits to full scene generation, from individual creators to major studio partnerships with Lionsgate.

Operational takeaway: Design for reversible consequences. Low-cost errors fuel compounding insight.

The Compound Effect: Why Sequential Trust Beats Simultaneous Trust

These mechanisms don’t operate independently; they compound through reinforcing dynamics:

Narrow success makes users receptive to transparency about limitations. When users see the system excel in a constrained domain, they develop confidence in its underlying capability. This confidence makes them willing to accept stated limitations in adjacent areas. Without the initial narrow success, limitation acknowledgment reads as excuse-making. With it, limitation acknowledgment reads as honest calibration.

Transparency about limitations makes mistakes recoverable. When users understand a system’s boundaries, errors within those boundaries don’t destroy trust, they confirm the system’s self-awareness.

Recoverable mistakes enable expansion to adjacent domains. When errors generate learning rather than abandonment, teams can progressively expand scope with user confidence intact.

The compounding creates trust that expands across progressively broader capability: narrow success (linear trust in one domain) plus transparency (extends trust to adjacent assessment) plus recoverable mistakes (maintains trust through errors) equals expanding confidence.

Revolutionary launch tries to build all trust simultaneously: competence across all domains, transparency about all capabilities, recovery from all mistakes. This simultaneous trust requirement explains why revolutionary launches fail more often than their capabilities would predict. The product might be technically excellent but trust-deficient because users haven’t had time to develop confidence through experience.

Gradual deployment sequences trust building: first narrow competence (prove underlying quality), then transparent boundaries (prove calibrated self-awareness), then recovery confidence (prove learning from errors). Each stage enables the next. The sequence creates compound trust that revolutionary launch cannot replicate.

Conclusion

Understanding why gradual deployment works doesn’t tell you how to execute it within your specific constraints. Every AI deployment faces a unique constraint profile that determines which strategies are viable. Some systems can iterate daily. Others face months between experiments. Some failures affect only your team. Others cascade through ecosystems.

The deployment paradox resolves when you match strategy to constraints. Theory illuminates what’s possible. Constraint diagnosis reveals what’s viable. Strategic execution determines what succeeds.